The cognitive remainder

Why AI breaks the historical case against technological unemployment

I’ve lost track of how many times I’ve read an article on AI that turns to history to explain why everything’s going to be just fine. Greg Ip’s recent piece in the Wall Street Journal, “Tech Has Never Caused a Job Apocalypse. Don’t Bet on It Now.”, may be well intentioned—after all, we could all do with some reassurance—but it’s also wrong. Not in its optimism, but in its foundations: the data is too early and the analogies are categorically broken.

Let’s examine the first piece of evidence offered by Ip:

> The ranks of software developers, widely assumed to be acutely vulnerable to AI, are up 5% in January from a year earlier, a pace largely consistent with the past 23 years. That’s according to Labor Department data analyzed by James Bessen, executive director of the Technology and Policy Research Initiative at Boston University.
>
> The number of computer programmers, who assist developers in ensuring code runs properly, was down slightly in the last year, in line with a secular decline in place for decades. Neither trend shifted much after ChatGPT’s arrival in late 2022.

There are several issues here—not least his curious distinction between “computer programmers” and “software developers”. But most importantly, his timeline is completely wrong.

When ChatGPT was introduced in late 2022, it was of limited practical use to software developers. GPT-3.5, the foundation model behind ChatGPT at that time, scored a measly 0.4% on SWE-Bench Verified, a widely used software engineering benchmark that measures models’ ability to solve real-world GitHub issues. It wasn’t until the beginning of 2025 that frontier models began surpassing the 50% mark, and not until late 2025 that they hit 80%.

Cursor, one of the first AI coding agents to be broadly adopted, grew from a user base in the thousands in mid-2024 to over 1 million by April 2025. Anthropic’s Claude Code, widely regarded as the best coding agent, was released to the public in May 2025. An initial research preview of OpenAI’s agent, Codex, was released the same month.

If, then, the real inflection point for agentic coding was sometime last spring, we shouldn’t expect to see it in employment figures yet. The hiring decisions that produce those numbers were made well before these tools matured. Ip’s 5% figure tells us something about 2024. It tells us very little about what’s coming.

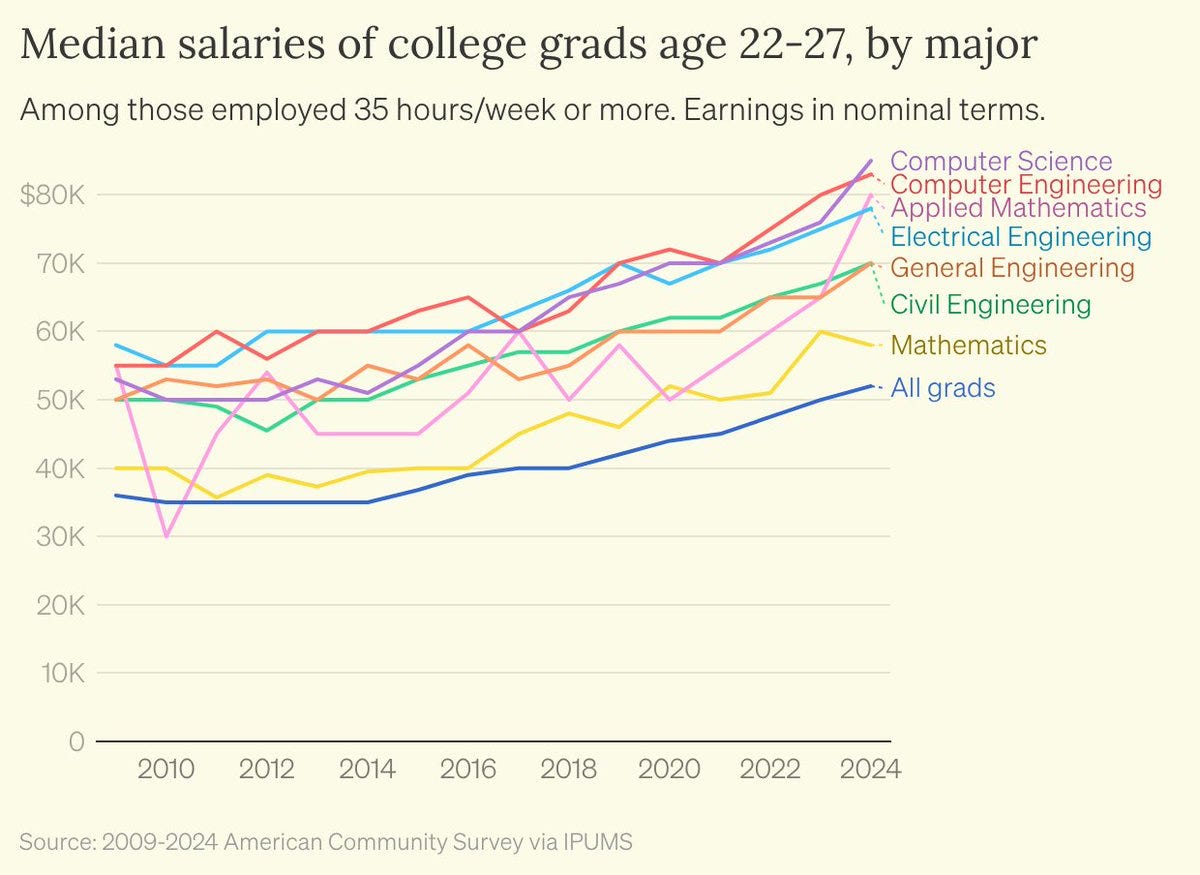

To understand what’s coming, at least in the context of employment, we should consider the impact on entry-level hiring for these roles. Ip argues that “competition from AI isn’t forcing computer scientists to take pay cuts” by citing survey data from the Institute for Progress. In his words, “In 2024, the median young computer science graduate earned 63% more than the typical young graduate, up from 47% in 2009”.

First, this data is two years out of date. Second, computer science is one of the most selective undergraduate programs in the United States, commanding a wage premium that predates AI. But most importantly, this is a conditional statistic: it tells you what employed CS graduates earn, not what happened to those who didn’t get hired. If the first workers displaced by agentic tools are the weakest new entrants, the remaining employed pool skews toward higher earners and the premium looks healthy while the denominator quietly shrinks. This is what statisticians call survivorship bias.
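To make that concrete, here is a minimal sketch in Python using entirely made-up numbers: ten hypothetical CS graduates, a hypothetical median wage for the typical young graduate, and the assumption that the three lowest earners are the ones who never get hired. None of these figures come from Ip’s article or the survey he cites; the function name `premium` is likewise just for illustration.

```python
from statistics import median

# Hypothetical salaries (in $1,000s) for ten young CS graduates.
# These values are invented for illustration only.
cs_wages = [55, 60, 65, 70, 75, 80, 85, 90, 100, 120]

# Hypothetical median wage (in $1,000s) of the typical young graduate.
typical_median = 50


def premium(employed_wages, baseline):
    """Percent by which the median *employed* wage exceeds the baseline."""
    return (median(employed_wages) / baseline - 1) * 100


# Scenario 1: all ten graduates find work.
before = premium(cs_wages, typical_median)

# Scenario 2 (assumption): the three lowest-paid entrants are never hired,
# so they vanish from the wage data altogether.
survivors = sorted(cs_wages)[3:]
after = premium(survivors, typical_median)
employment_rate = len(survivors) / len(cs_wages)

print(f"Premium with everyone employed: {before:.0f}%")
print(f"Premium among survivors:        {after:.0f}%")
print(f"Share of graduates employed:    {employment_rate:.0%}")
```

With these invented figures, the measured premium rises from 55% to 70% even though no individual earns a cent more and three in ten graduates have dropped out of the sample entirely.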

Ip then cites a study at Stanford by Erik Brynjolfsson:

> Employment of 22- to 25-year-olds in the most AI-exposed occupations such as software developers and customer-service agents fell 6% in the three years after the introduction of ChatGPT while that of older workers and workers in unexposed occupations rose.
>
> But some critics say the drop could be explained by other factors, such as rising interest rates, that predate ChatGPT. Job postings for software developers jumped in the wake of the pandemic, then started to fall in early 2022, according to Indeed Hiring Lab.

The 6% he uses is the raw absolute employment decline for 22- to 25-year-olds in the most exposed occupations. The researchers, after controlling for firm-time effects (including interest rate sensitivity), found that young workers experienced a 16% relative employment decline in the most exposed occupations. Ip relies on the paper’s weakest, least controlled statistic while ignoring the more robust estimate the authors constructed to address the very alternative explanation he uses to dismiss it. The researchers concluded that, even after accounting for other factors, the data suggests that “generative AI has begun to affect entry-level employment significantly.”

James Bessen, whom Ip cites throughout the piece, pointed to an 11% increase in business software spending as evidence that demand for software is elastic enough to offset displacement, without noting that this figure almost certainly includes spending on the AI tools doing the displacing. Even the portion that isn’t going to AI directly may reflect companies preparing their infrastructure for AI.

But setting that aside, the analogies he and Ip reach for to make this case reveal a more fundamental problem:

> This, Bessen notes, is in line with previous technological advances that drive prices down and demand up enough to offset direct job displacement. His examples include textile manufacturing in the 19th century, and the spread of ATMs in the 1980s.
>
> My favorite example: As the number of bookkeepers shrank with the introduction of spreadsheet software in the early 1980s, the number of accountants and financial analysts newly empowered by Lotus 1-2-3 and Excel rose even more.

These analogies reflect a broader tradition within economics of reflexively countering what the profession calls the “Luddite fallacy”—the assumption that technology destroys jobs in aggregate. The counter-argument has been so consistently vindicated by history that it has hardened into a fifth law of thermodynamics: technology displaces some workers, but the offsetting mechanisms always compensate.

The bookkeeper-accountant analogy only works if you accept that automation attacks the mechanical layer and leaves the cognitive layer intact. That is the assumption carrying much of the historical case against technological unemployment. AI represents a serious challenge to that assumption since it is the automation of cognitive work itself—an important distinction that many commentators who seek comfort in history consistently ignore.

Not only can AI build spreadsheet software, it can create spreadsheets, analyze data, and synthesize the results to make decisions. That is not just the automation of a single role, but of an entire value chain: the junior analyst pulling the data, the mid-level analyst building the model, the senior analyst synthesizing the output, the VP making the call. AI is becoming a plausible replacement for all four, not just the first.

If AI can perform meaningful parts of the interpretive and synthetic work that was once exclusively human territory, then the offsetting mechanisms economists rely on to refute the Luddite fallacy may prove weaker this time around. Not because history tells us nothing, but because the underlying thing we’re automating is different.

I’m not arguing that we will necessarily experience a “job apocalypse”; I actually think it’ll be slower and quieter than that. And, to briefly agree with Ip, I could see some professions demanding human service regardless of how capable AI becomes because people prefer to interact with other people. Where we differ is in the faith we have in the labor market to self-correct. That faith has been earned by two hundred years of history. The question is whether it survives contact with a technology that is, for the first time, attempting to automate knowledge-based work as we know it. Software engineering is simply the first industry where the tools have matured enough to make this visible.